Advanced Prompt Optimization: How to Tune Your Prompts for Perfection

Prompt optimization is the process of refining a prompt to improve its reliability, accuracy, and efficiency. When you are building AI applications or relying on AI for critical workflows, "good enough" isn't enough. You need consistent, high-quality outputs that don't break the bank.

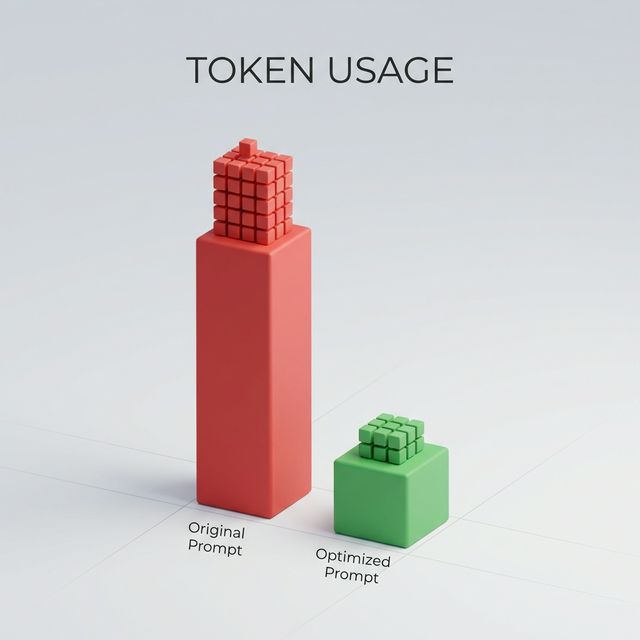

In production environments, a 10% reduction in token usage can save thousands of dollars. Here are the techniques prompt engineers use to optimize their inputs.

1. Delimiters are Your Best Friend

When pasting text for the AI to process, use delimiters to clearly separate instructions from data. This prevents "prompt injection" confusion where the AI might mistake data for instructions.

Common Delimiters:

- Triple quotes:

""" - Triple backticks: ```

- XML tags:

<text></text> - Dashes:

---

Example:

Summarize the text delimited by triple quotes.

""" [Insert Text Here] """

2. Reduce "Fluff" (Token Optimization)

LLMs have context windows (limits on how much text they can remember) and bill by the token. Verbose prompts waste money and can confuse the model. Be direct.

The "Politeness" Tax

You don't need to say "please" or "if you could".

- Verbose (40 tokens): "I was wondering if you could possibly take a look at this list and maybe try to sort it alphabetically if it's not too much trouble?"

- Optimized (6 tokens): "Sort following list alphabetically."

Result: Same output, 85% cost reduction.

3. The "Negative Prompt" Strategy

Sometimes it is easier to say what you don't want. This is standard in image generation (Midjourney, Stable Diffusion) but works for text too.

"Write a product description. Negative Constraints: Do not use sales clichés like 'game-changer' or 'disruptive'. Do not use exclamation marks. Do not mention price."

4. Divide and Conquer (Chain Prompting)

If a task is complex, the model is more likely to hallucinate or miss steps. Break the task into a chain of smaller prompts.

Instead of:

"Read this report, summarize it, translate it to Spanish, and then write a formatted email sending it to my boss."

Do (Prompt Chaining):

- Prompt A: "Summarize this report."

- Prompt B: "Translate the summary above into Spanish."

- Prompt C: "Draft an email to my boss including the Spanish summary."

This allows you to verify the output at each stage.

5. Self-Consistency and Reflection

Ask the model to critique its own work before finalizing it. Validating the output within the conversation can catch errors.

Prompt Pattern:

"Generate the code for [Task]. Then, review your code for security vulnerabilities. If you find any, fix them and output the final secure code."

6. Specify the Output Structure (JSON/XML)

For developers integrating LLMs into apps, structured output is non-negotiable.

Prompt:

"Extract the names and email addresses from the text. Return the result strictly as a JSON array of objects with keys 'name' and 'email'. Do not include any other text."

Code Example:

[

{ "name": "Alice Smith", "email": "alice@example.com" },

{ "name": "Bob Jones", "email": "bob@example.com" }

]

Testing Your Prompts

Optimization requires testing. Run your prompt 5-10 times.

- Does it fail 2 out of 10 times?

- Is the tone consistent?

- Does it ever cut off?

If it fails, identify the ambiguity in your instruction and patch it.

Conclusion

Prompt optimization is an iterative cycle: Draft -> Test -> Refine -> Repeat. By using delimiters, being concise, and breaking down complex tasks, you can turn a fragile prompt into a robust tool.

Ready to optimize your prompts?

Use Promptnia's advanced generator to create the perfect prompt for your needs.

Go to Generator